One of the biggest areas of confusion with the VMware Cloud on AWS service is the networking. Honestly, I can see why: I remember my first meetings with engineering, and it was a bit overwhelming. Having spent the last year working with several customers, I now believe I have a fairly easy explanation for how the networking works. I do have to say this was not easy to accomplish from an engineering perspective, so I think we all need to give massive respect to the teams at both Amazon Web Services and VMware for what they accomplished. In this guide I will do my best to describe the networking in VMware Cloud on AWS in simple terms. This is not meant to be a technical deep dive or a reference document. It is just a way to better understand how the service works.

Let’s start at the very beginning…

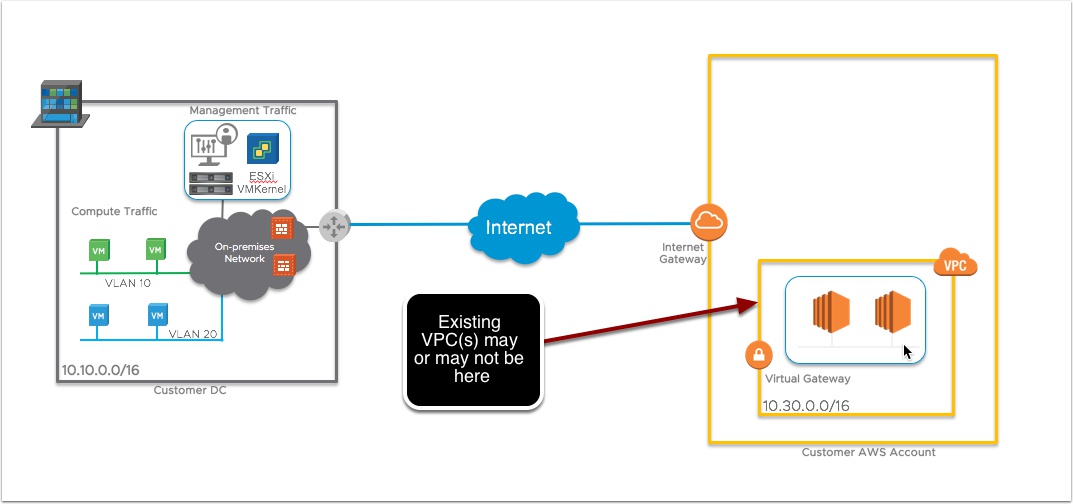

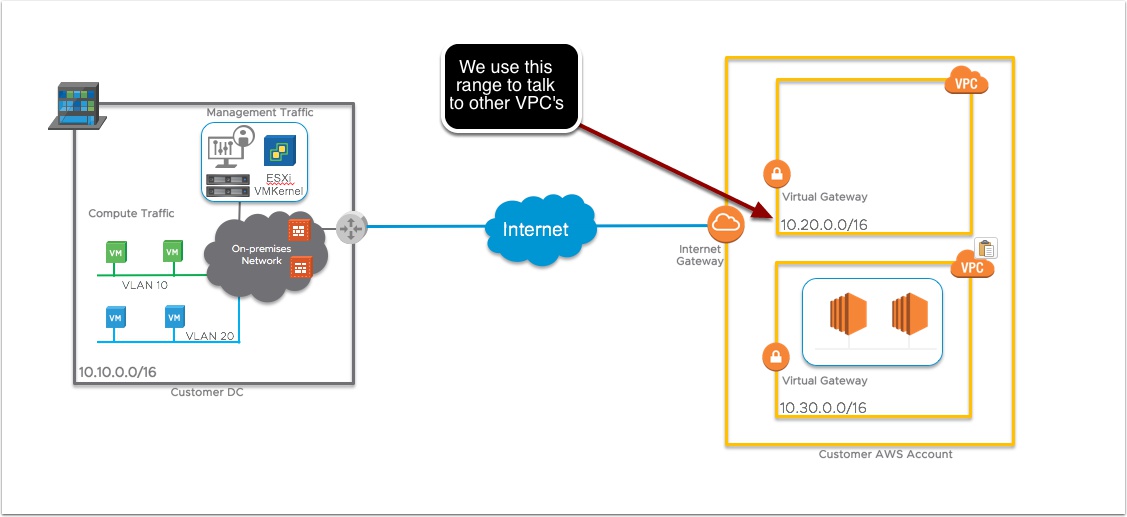

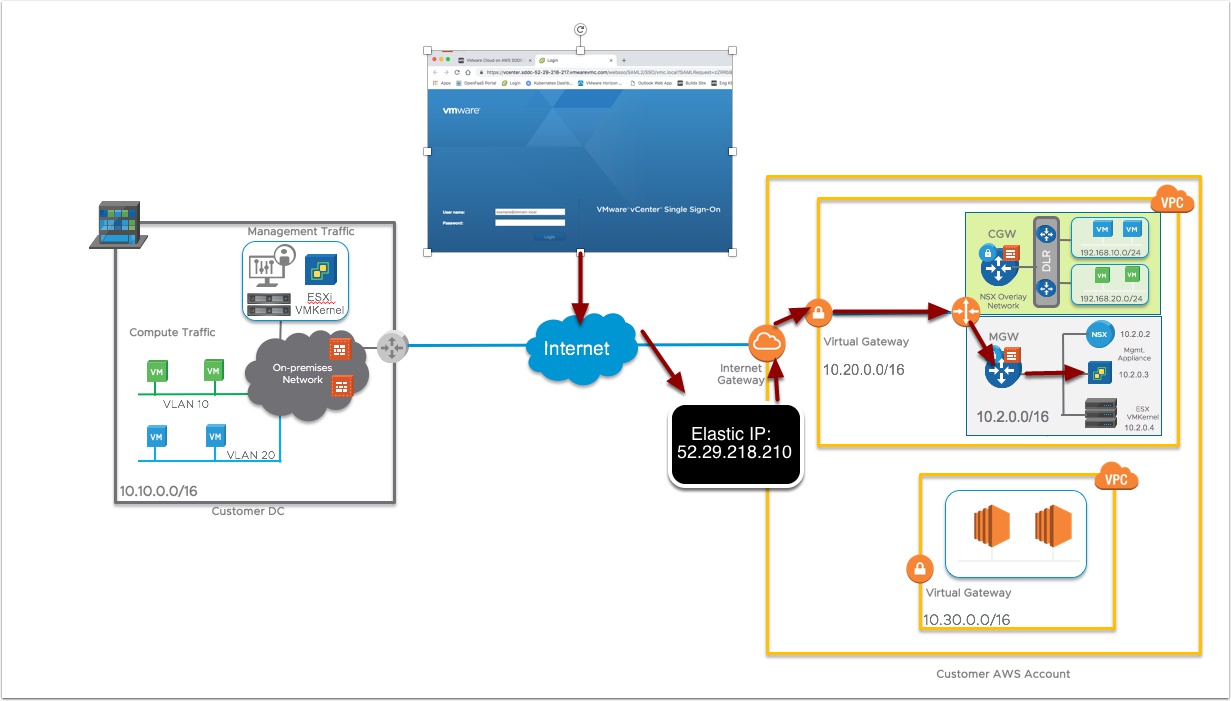

This represents a typical customer scenario: some vSphere on-prem and an AWS account. The customer may or may not already have an existing VPC. Keep in mind that to use the VMware Cloud on AWS service you do not need vSphere on-prem or an existing VPC. You do need your own AWS account, though, and you need to be registered on MyVMware.com.

One of the requirements for deploying the VMware SDDC in AWS is for the customer to own an AWS account and then create an empty default VPC in the AWS Console.

This AWS VPC needs a CIDR block assigned and at least one subnet created in one Availability Zone. NOTE: We will not use these addresses for the actual SDDC components (ESXi hosts, vCenter, NSX Manager, etc.).
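If the CIDR block and subnet relationship is a little abstract, here is a minimal sketch using Python's standard `ipaddress` module. The `10.0.0.0/16` VPC range and the `/24` subnet size are made-up example values, not anything the service requires.

```python
import ipaddress

# Hypothetical example values; use whatever range your network team assigns.
vpc_cidr = ipaddress.ip_network("10.0.0.0/16")

# Carve the VPC block into /24 subnets and take the first one,
# standing in for the single subnet created in one Availability Zone.
subnets = list(vpc_cidr.subnets(new_prefix=24))
first_subnet = subnets[0]

print(f"VPC CIDR:      {vpc_cidr} ({vpc_cidr.num_addresses} addresses)")
print(f"First subnet:  {first_subnet} ({first_subnet.num_addresses} addresses)")
print(f"Subnet in VPC: {first_subnet.subnet_of(vpc_cidr)}")
```

The point is simply that the subnet has to be a slice of the VPC's CIDR block.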

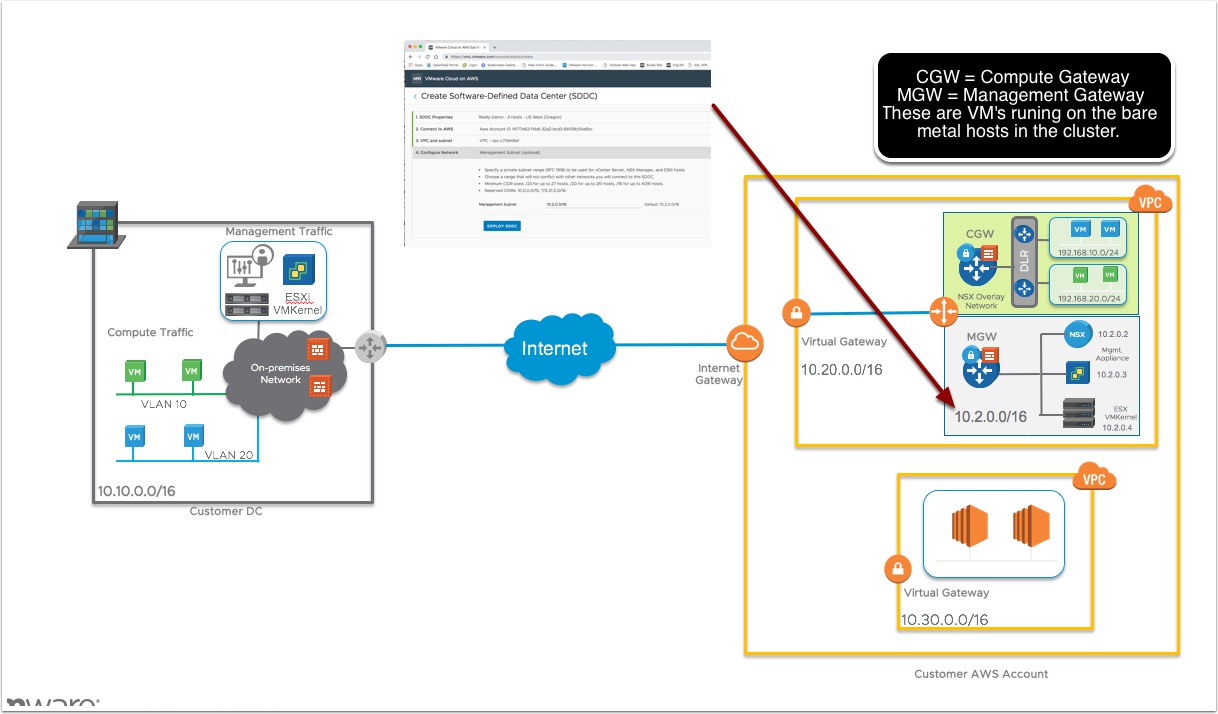

Now, from the VMware Cloud Services portal, we can provision the vSphere SDDC inside the AWS VPC we created earlier, using the CIDR block you want for the SDDC components.

Essentially what we are doing is creating a VPC inside a VPC. The AWS VPC is the shell VPC, and inside that we are deploying NSX software-defined networking to wire up the bare-metal ESXi hosts, vCenter, vSAN and NSX Manager components. We use the AWS VPC Virtual Gateway to get in/out to the AWS network and the customer's other VPCs, and we use the internet gateway to get in/out to the internet. The reason we need a CIDR block is so we have a pool of IP addresses to assign to new hosts or virtual appliances you may add to your SDDC at some point. This should be a CIDR that is routable from your on-prem vCenter and ESXi hosts. So think of this like you are standing up a new colo or data center and you need to install a new cluster. What IP addresses would you get from your network team?
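One practical reading of "routable" is that the SDDC block should not collide with ranges you already use on-prem or in AWS. That is my assumption rather than a rule stated above, but it is an easy thing to sanity check. Here is a rough sketch with Python's `ipaddress` module. The `find_overlaps` helper and all of the address ranges are hypothetical, for illustration only.

```python
import ipaddress

def find_overlaps(candidate, existing):
    """Return the existing networks that overlap the candidate CIDR."""
    cand = ipaddress.ip_network(candidate)
    return [net for net in existing if cand.overlaps(ipaddress.ip_network(net))]

# Hypothetical ranges already in use (on-prem and the shell AWS VPC).
in_use = ["10.0.0.0/16", "192.168.0.0/16"]

# A candidate that sits outside every range in use.
print(find_overlaps("10.2.0.0/16", in_use))    # []

# A candidate that falls inside 10.0.0.0/16, so it would conflict.
print(find_overlaps("10.0.128.0/20", in_use))  # ['10.0.0.0/16']
```

An empty list means the candidate does not clash with anything you listed, which is the same question your network team would ask before handing you a range for a new data center.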

OK smart guy, so now the SDDC is deployed, how do I access vCenter and log in?

By default the built-in NSX firewalls are set to deny all, so you need to go into the VMware Cloud portal and allow access to vCenter over port 443 via the internet. We will also assign a public internet address via an Amazon Elastic IP that is set up to NAT into the vCenter server. Once we test that everything is working, we will then set up a VPN or a Direct Connect to access the SDDC over a secure connection.
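To picture what "deny all by default" means, here is a toy model in Python. It is not the NSX API or how the VMware Cloud portal is implemented. The `Rule` class, the `is_allowed` function and the `"vcenter"` / `"internet"` labels are all invented for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Rule:
    """A hypothetical allow rule: who may reach what, on which port."""
    source: str        # "any" or a specific source label
    destination: str
    port: int

def is_allowed(rules, source, destination, port):
    """Allow traffic only if some rule matches; otherwise deny."""
    for rule in rules:
        if (rule.destination == destination
                and rule.port == port
                and rule.source in ("any", source)):
            return True
    return False  # nothing matched, so the default deny applies

rules = []  # a freshly deployed SDDC: no allow rules yet
print(is_allowed(rules, "internet", "vcenter", 443))  # False

# Add the one rule described above: vCenter on port 443.
rules.append(Rule(source="any", destination="vcenter", port=443))
print(is_allowed(rules, "internet", "vcenter", 443))  # True
print(is_allowed(rules, "internet", "vcenter", 22))   # False
```

Nothing gets through until you explicitly add a rule, and even then only the port you opened.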

Well that makes sense now, but we don’t want our vCenter on the internet, what options do you have?

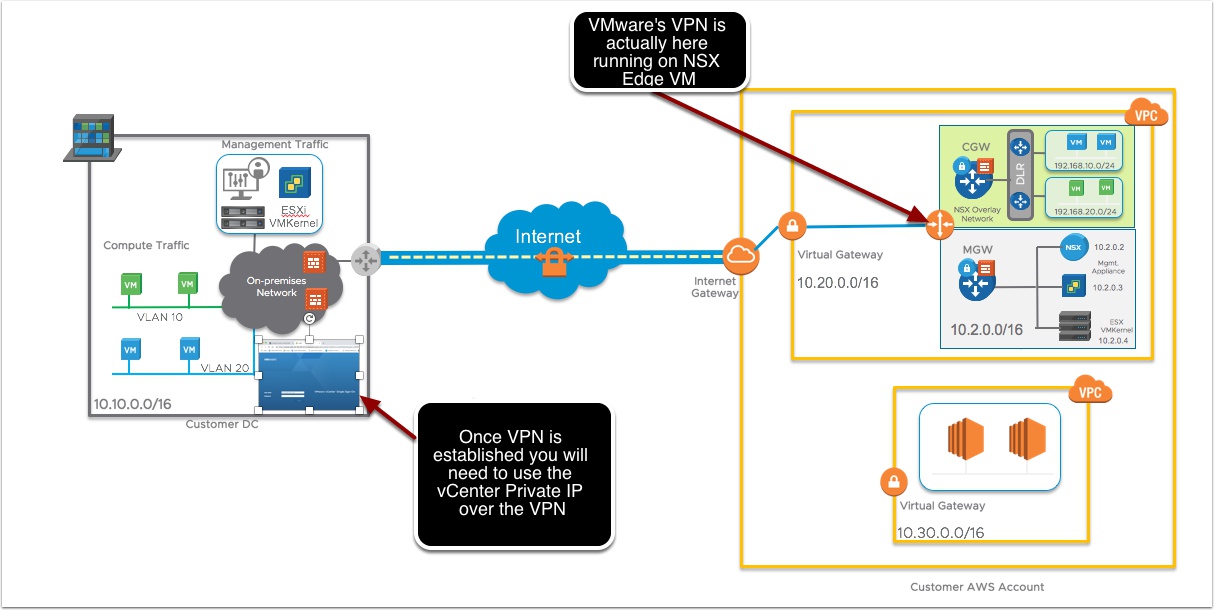

Option 1: L3 VPN over the internet. We support both routed and policy-based VPN connections.

When you create the VPN in the VMware Cloud on AWS console, we also create a public-facing IP using an Amazon Elastic IP address that is NAT'ed into the Management Gateway appliance.

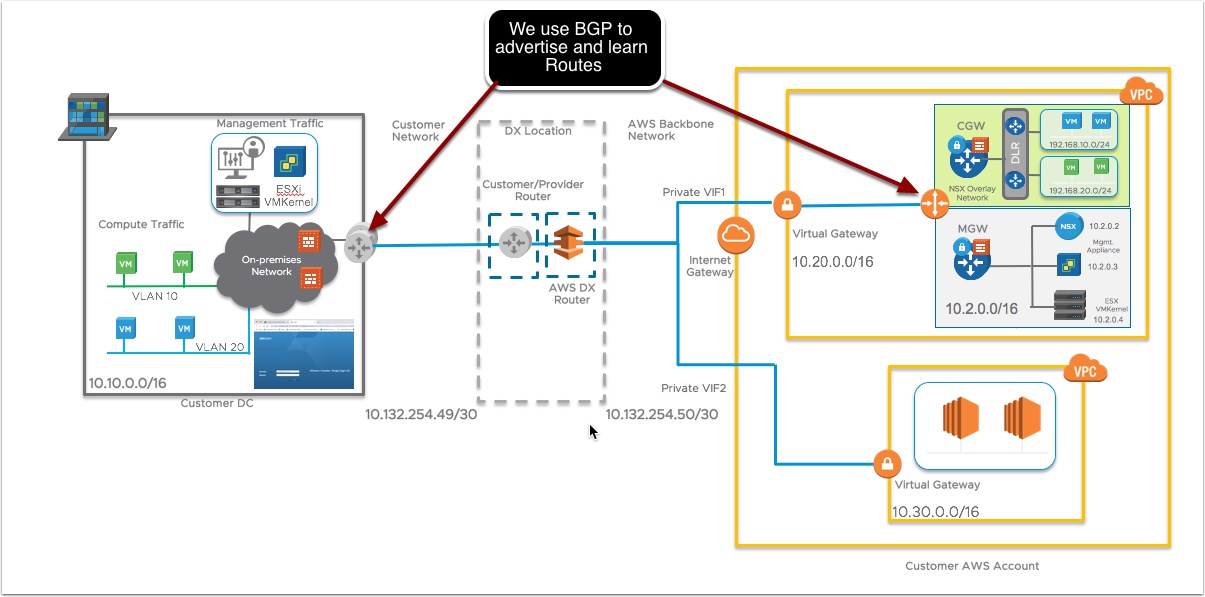

Option 2: AWS Direct Connect

Clear as mud? Great! If not, leave comments below or ping me on Twitter @vmtocloud.

Next up, learn how HCX works and how live migration to/from VMware Cloud on AWS works –> Here
